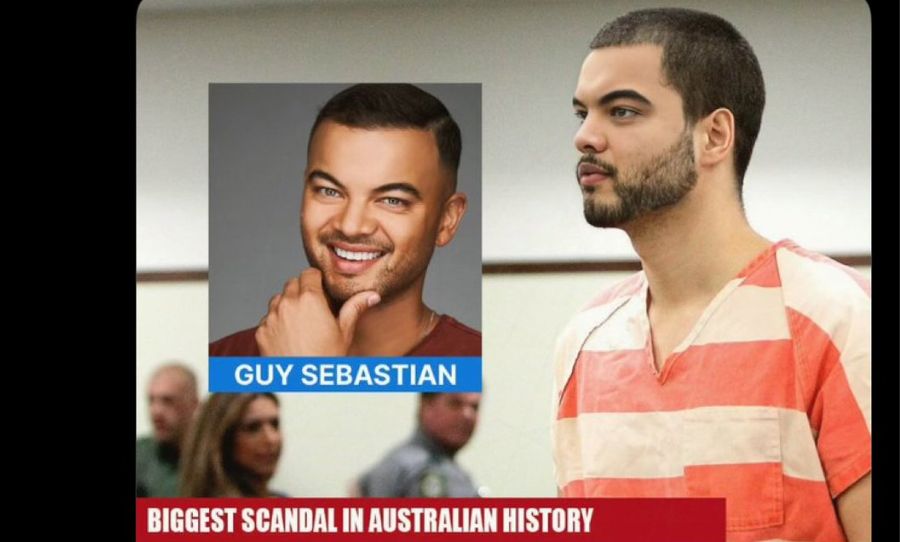

Deepfakes have been causing quite a stir across the web lately. With the power to sway political elections, crumble careers and manipulate the views of the general public, it’s no surprise people are wary of their potential.

Deepfakes have the ability to create fake news and malicious hoaxes. They manipulate relationships and pose a serious threat to posterity. The increase in accessibility has led to a boom in prevalence across sectors like social media, cinema, politics and porn.

Today we look at the dangers of deepfakes and the consequences of having widespread fabricated truths.

Deepfakes are already everywhere we go. From the cinema to politics to porn, deepfakes are becoming incredibly convincing and increasingly malicious.

Deepfakes in Cinema

Deepfakes may sound like the stuff of fantasy, but they are very real, very convincing and very scary. In fact, you have probably already been fooled by some very impressive deepfakes in cinema.

2016’s epic space opera Rogue One: A Star Wars Story resurrected Peter Cushing for a performance from beyond the grave. With the aid of advanced CGI, an eerily lifelike Cushing reprised his role as the sneering Imperial officer Grand Moff Tarkin from the 1977 original Star Wars: A New Hope, even though the actor had been dead for more than 20 years.

There’s even a terribly unnerving video of Rami Malek being turned into Freddie Mercury to emphasise the potential for recreating people in cinema. It’s so lifelike that you can hardly tell the fake from reality. One need only let their imagination off its tether for a moment to grasp the potential of this technology.

2020 Election

With the US 2020 election fast approaching, voters are on the edge of their seats, studying polls and campaigns to predict the outcome. However, deepfakes have already been used by the Trump administration in attempts to demonise the opposition and sway voters.

There was controversy recently surrounding a doctored video, technically referred to as a ‘shallowfake’, of President Trump’s confrontation with CNN reporter Jim Acosta at a press conference. The original footage clearly shows a female White House intern attempting to take the microphone from Acosta, but subsequent editing made it look like the CNN reporter attacked the intern.

The clip being shared by the WH Press Secretary and Infowars has been slowed down then sped up to create the illusion of a karate chop.

Original video here: https://t.co/8LNcAOKjXd

— Aymann Ismail (@aymanndotcom) November 8, 2018

This subtle manipulation of the truth proves that doctored videos don’t have to be deep to be dangerous, and the law is starting to crack down.

California has just passed a law, Assembly Bill 730, which criminalises the creation and distribution of video content (as well as still images and audio) that is faked to pass as genuine footage of politicians. AB 730 makes it a crime to do this within 60 days of an election, with an exception for news media.

In May 2019, a video of Democratic politician Nancy Pelosi was doctored to make her appear drunk and impaired while speaking at a conference. Pelosi is a popular target for Republicans and alt-right advocates as she is the highest-ranking elected woman in United States history. The original video was slowed to about 75% of its normal speed while her voice was pitch-altered. The clip then went viral on social media, and Pelosi received significant backlash and criticism.

The alarming notion underlying all these conversations is the potential prevalence of undetected deepfakes: the doctored videos that don’t hit the headlines. These are the ones subtly swaying public opinion, and there is no telling how many of them exist.

Deepfakes in pornography

Despite the threat to polls and electorates, by far the most dangerous deepfake avenue is that of pornography. Henry Ajder, head of research analysis at Deeptrace, said “a lot of people are forgetting that deepfake pornography is a very real, very current phenomenon that is harming a lot of women.”

It’s a weaponised assault on women which says, in the most literal sense, you have no right over your body. Celebrities like Emma Watson, Natalie Dormer, Gal Gadot and Natalie Portman have already been victimised by having their faces digitally rendered onto convincing pornographic images and videos.

This is an extremely damaging patriarchal belittlement of an entire gender, all for hollow titillation. I believe this is the new frontier of deepfakes that is truly disturbing. Anyone in the public spotlight is in danger of this invasion and it is almost exclusively targeting women.

In an attempt to combat this, various laws have been passed around the world to criminalise revenge porn. In Australia, the Enhancing Online Safety (Non-consensual Sharing of Intimate Images) Bill was passed in August 2018, which means individuals could face civil penalties of up to $105,000, and corporations up to $525,000, if they do not remove an image when requested to by the eSafety commissioner.

In fact, the first person to be charged under revenge porn laws has already appeared in Perth, WA. A young man took pictures of his 24-year-old girlfriend without her consent or knowledge and uploaded seven of them to a fake Instagram account under her name. He could face up to 18 months in prison.

While this isn’t explicitly deepfake related, it is a step in the right direction to see these laws being passed and enforced in order to combat revenge porn.

The consequences

We have already seen the result of deepfakes with the viral prevalence of ‘fake news’. This disinformation creates deliberate falsehoods and presents them under the guise of truth. This technology can make it look like anyone is saying anything, at any point in time.

This will inevitably lead to an increasingly dangerous and fallible online landscape. As the technology improves and becomes more readily accessible, truth as we know it will almost cease to exist on the net. But who’s to say where truth exists online nowadays anyway?

If you’re concerned about deepfakes, invest in ZeroFox. It is a trusted IT company, formed in 2013, that is solely dedicated to deepfake detection and cyber security.